Algocracy Special: Fakenews Algorithms and Czech Senate

Explaining automated cyber-propaganda (not just) to politicians

By Josef Holy

About two months ago I had an opportunity to participate in a discussion panel about Fakenews in the Czech Senate together with Frantisek Vrabel, CEO of semantic-visions.com.

The main topic of the whole event was the technologies and algorithms powering current online platforms, and their role in generating and distributing fakenews.

Below is a loose rewrite of my presentation, in which I tried to explain in layman’s terms how algorithms powering those online platforms work.

Fakenews as cyber-propaganda

Fakenews is not someone simply lying on the internet. It is sophisticated, semi-automated, data-driven propaganda that leverages Information and Communication Technologies (ICTs).

That’s why we talk about “cyber-propaganda”.

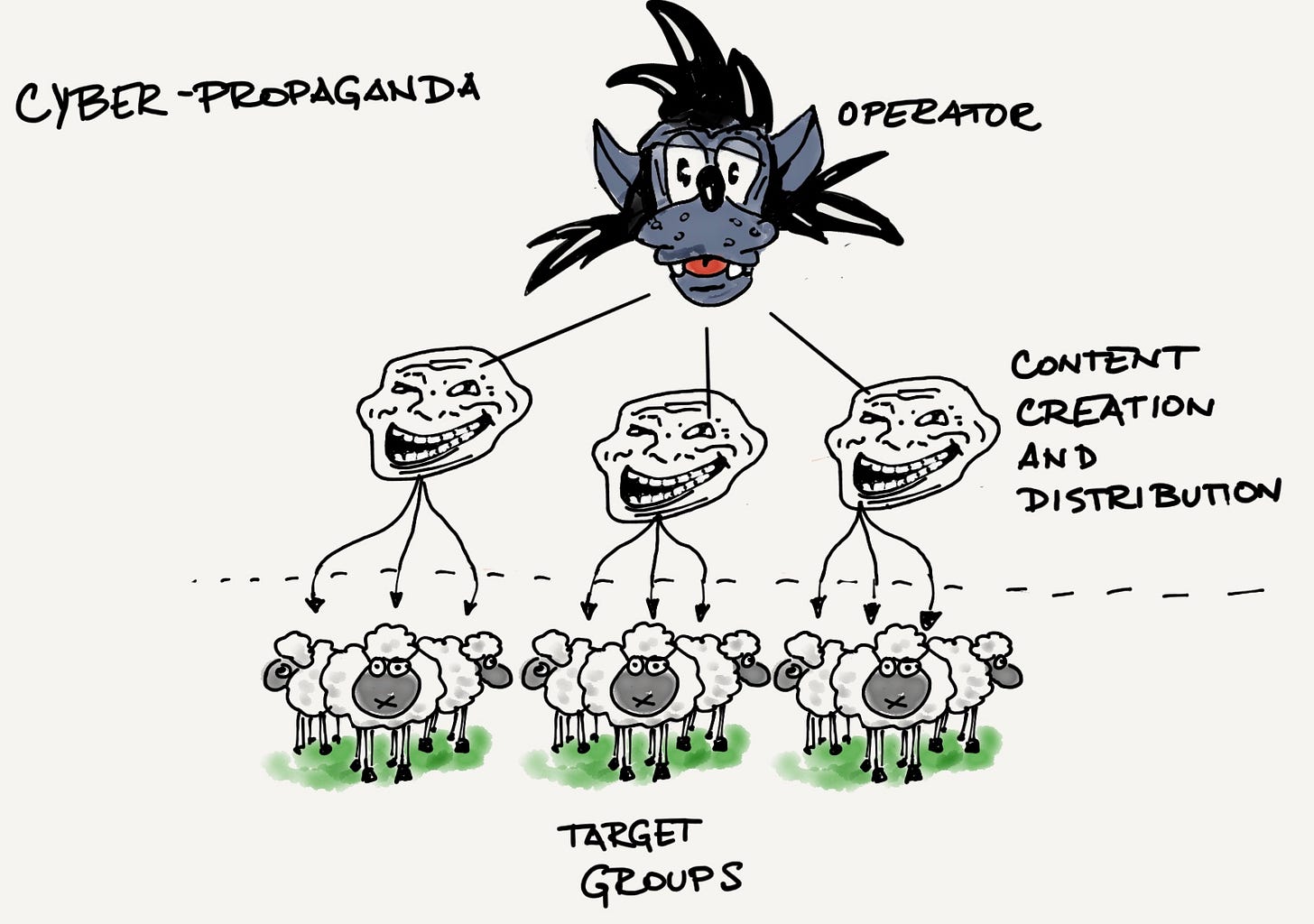

Cyber-propaganda is the manipulation of public opinion in a certain direction by means of Information and Communication Technologies.

This report nicely describes how cyber-propaganda works.

There is always an Operator, pursuing a certain goal.

Think, for example, of a Russian secret service aiming to polarize public discourse in the Czech Republic around the topic of immigration.

To achieve that goal, the Operator designs a specific campaign, which starts with a team of seed users, who develop and test the content - text or video - and push that content into online networks.

Think, for example, of a made-up article describing how the Czech Pirate Party wants to provide free apartments to all immigrants from Africa, posted on several dozen websites, with links shared in appropriate Facebook groups.

The ultimate goal is a snowball effect - to get the content to spread virally, with regular social media users passing it on themselves through likes, comments and shares.

The Scale of Algorithmic power

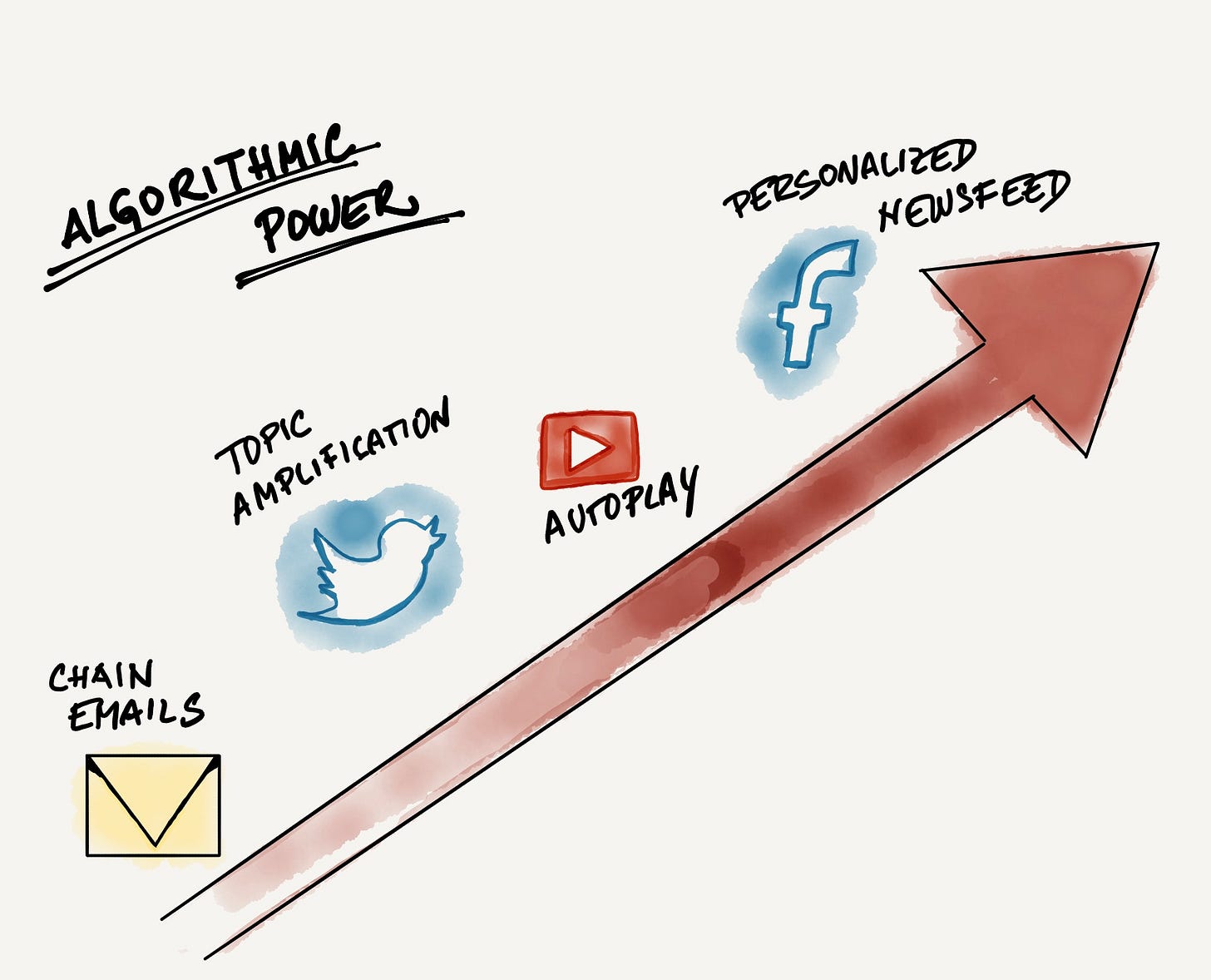

Cyber-propaganda leverages various online content and media platforms for creation and distribution of fakenews.

We will look at the following four, which currently have the biggest impact - Email, Twitter, Youtube and Facebook.

We can sort these four online platforms based on their Algorithmic power, which simply means how much the machines are in charge of content distribution.

Chain Emails: The private social network

Emails are used to spread misinformation mostly among seniors, who tend to be less technically savvy.

They also demonstrate lower media literacy, largely as a result of having lived large parts of their lives under a totalitarian communist regime, which kept a tight grip on the flow of information in society.

Email represents a decentralized and private social network, where the connections between people are not stored in a database of one service provider (e.g. Facebook) but exist in address books of individual recipients.

Misinformation campaigns then start with sending emails to a set of “Users Zero”, who forward them to other contacts in their address books. Users forward the content because they trust the sender they received the email from.
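The forwarding chain described above can be sketched as a breadth-first walk over address books. This is only a toy illustration: the `ADDRESS_BOOKS` data and the `forward_cascade` function are hypothetical, and the sketch assumes the worst case in which every recipient forwards the email to all of their contacts.

```python
from collections import deque

# Hypothetical address books: each user maps to the contacts they forward to.
ADDRESS_BOOKS = {
    "seed_a": ["u1", "u2"],
    "seed_b": ["u2", "u3"],
    "u1": ["u4"],
    "u2": ["u4", "u5"],
    "u3": [],
    "u4": ["u6"],
    "u5": [],
    "u6": [],
}


def forward_cascade(address_books, users_zero):
    """Return, for every user reached, the number of forwarding hops from a User Zero."""
    hops = {user: 0 for user in users_zero}
    queue = deque(users_zero)
    while queue:
        sender = queue.popleft()
        for contact in address_books.get(sender, []):
            if contact not in hops:  # count each user once, at first receipt
                hops[contact] = hops[sender] + 1
                queue.append(contact)
    return hops


reach = forward_cascade(ADDRESS_BOOKS, ["seed_a", "seed_b"])
print(len(reach), "users reached")
print(reach)
```

Two Users Zero are enough to reach all eight users in this made-up network, and nobody along the way sees more than the address of the person who forwarded the email to them.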

Of course, simple forwarding would make it fairly easy to track down the original source of the content. That’s why Operators and their teams literally educate their target audience by sending them step-by-step instructions on how to send blind (invisible) copies, i.e. BCC (article in Czech).

This makes tracking the original source very difficult or nearly impossible.

For chain email campaigns, operators leverage most of the traditional email marketing tools and practices, from acquiring user information (email addresses, demographics, geolocation, etc.) and tracking link click-throughs to evaluating the “performance” of individual Users Zero, that is, how large their impact and influence are.
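Click-through tracking of this kind usually relies on tagged links, the same way ordinary email marketing does. The sketch below is a minimal illustration under assumptions: the `src` query parameter, the `example.com` URLs and the `clicks_per_seed` function are all hypothetical and only show how clicks could be attributed to the User Zero who received a given link.

```python
from collections import Counter
from urllib.parse import urlparse, parse_qs

# Hypothetical click log: URLs as they arrive at a tracking server.
# The "src" parameter is an assumed tag identifying which User Zero got the link.
CLICK_LOG = [
    "https://example.com/article?src=seed_a",
    "https://example.com/article?src=seed_b",
    "https://example.com/article?src=seed_a",
    "https://example.com/article",
    "https://example.com/article?src=seed_a",
]


def clicks_per_seed(urls, param="src"):
    """Count clicks per seed tag; links without a tag are counted as 'untagged'."""
    counts = Counter()
    for url in urls:
        query = parse_qs(urlparse(url).query)
        seed = query.get(param, ["untagged"])[0]
        counts[seed] += 1
    return counts


stats = clicks_per_seed(CLICK_LOG)
for seed, clicks in stats.most_common():
    print(seed, clicks)
```

Ranking seeds by click count is the simplest possible "performance" measure; real marketing tools track far more than this.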

Twitter: Shout loud to be heard

While the email chains described above are mostly manual (in the sense that people are the ones who spread the (mis)information through the email network), Twitter is a centralized online social network that allows semi- or fully automated information distribution.

This automation is usually done through so-called Twitter bots and bot networks.

A Twitter bot is an artificial (non-human) Twitter account that semi-automatically tweets, retweets, likes, follows and mentions other users and their tweets.

Individual Twitter bots are connected into large networks (botnets), which can consist of tens or even hundreds of thousands of bots.

All of them follow, like and retweet each other in order to amplify certain tweets or hashtags.

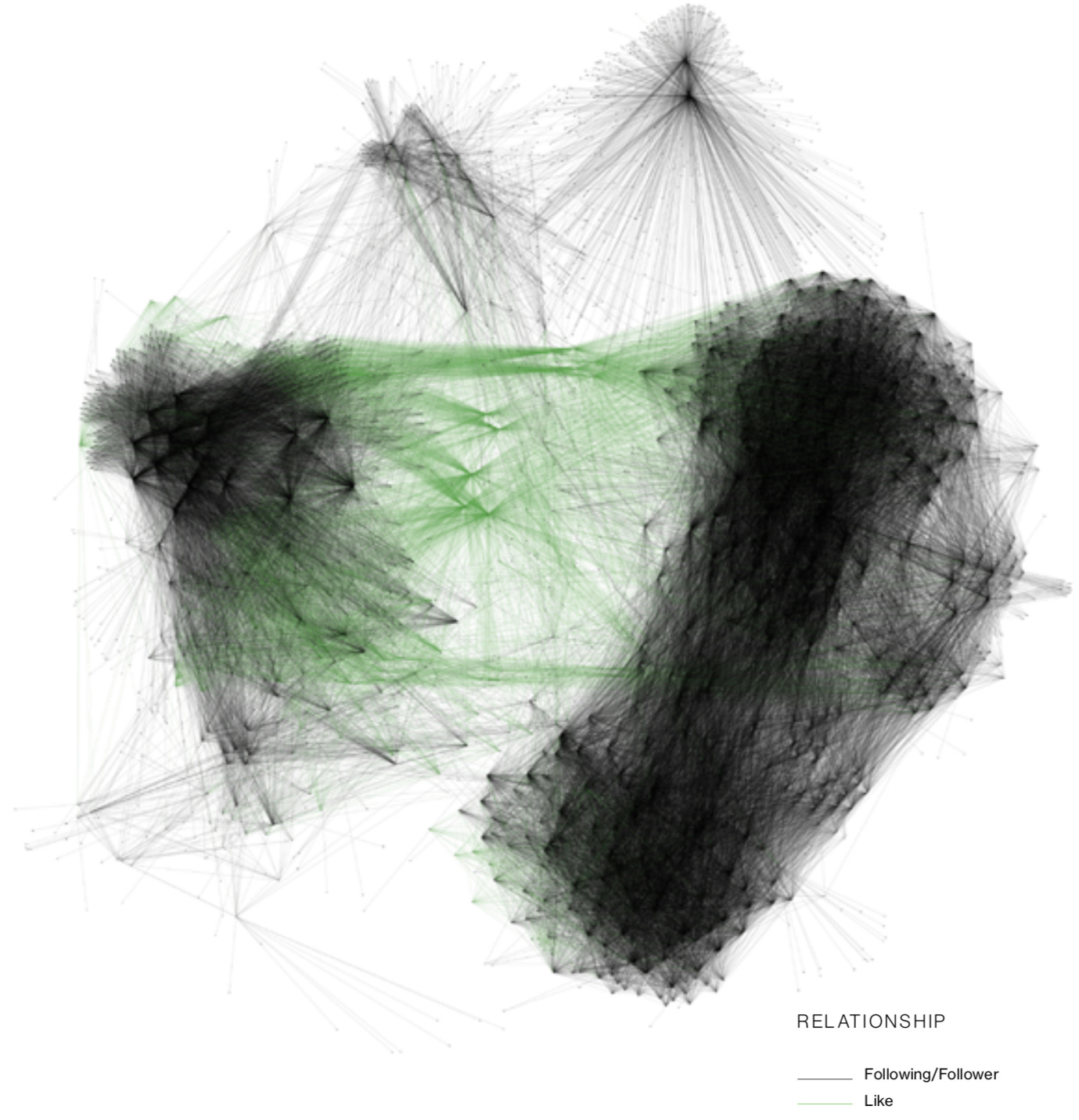

Just look at the scale - a visualization of a botnet of tens of thousands of bots. Black nodes are bots, black lines show how they follow each other, and green lines show how they Like and Retweet each other’s tweets to amplify them. Source: duo.com

Botnets are centrally controlled and can be hired for a specific purpose on the black market.

The main purpose of botnets is to amplify tweets and hashtags. Topics and tweets with the most likes, retweets and responses rank higher in Twitter search results and can even make it into various trending lists, which further increases their overall reach and impact.
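To make the amplification effect concrete, here is a toy ranking sketch. It is not Twitter's actual algorithm: the `engagement_score` weights, the hashtag names and all the numbers are made-up assumptions, chosen only to show how a few thousand automated likes and retweets can push a planted hashtag past organic ones.

```python
from dataclasses import dataclass


@dataclass
class Hashtag:
    name: str
    likes: int
    retweets: int
    replies: int


def engagement_score(tag, w_like=1.0, w_retweet=2.0, w_reply=1.5):
    """Assumed scoring rule: a weighted sum of likes, retweets and replies."""
    return w_like * tag.likes + w_retweet * tag.retweets + w_reply * tag.replies


def amplify(tag, bots):
    """Assume every bot in the botnet adds one like and one retweet."""
    return Hashtag(tag.name, tag.likes + bots, tag.retweets + bots, tag.replies)


def trending(tags, top_n=2):
    """Return the names of the top_n hashtags by engagement score."""
    ranked = sorted(tags, key=engagement_score, reverse=True)
    return [tag.name for tag in ranked[:top_n]]


organic = [
    Hashtag("#local_news", likes=900, retweets=300, replies=200),
    Hashtag("#football", likes=1500, retweets=400, replies=350),
    Hashtag("#planted_story", likes=40, retweets=10, replies=5),
]

before = trending(organic)
boosted = [amplify(t, bots=1000) if t.name == "#planted_story" else t for t in organic]
after = trending(boosted)

print("before:", before)
print("after: ", after)
```

In this made-up example a botnet of 1,000 accounts is enough to move the planted hashtag from last place to the top of the trending list.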

One recent example of this automated amplification is the case of the journalist Jamal Khashoggi, who was murdered by Saudi agents in October 2018.

When reports about the event started to gain traction in the public space, various security researchers observed a sudden emergence of trending topics tied to pro-Saudi propaganda.

The goal was simple - to amplify the pro-Saudi version of events at the expense of the truth.

It is fair to say that in recent years Twitter has made progress in fighting botnets, but it remains difficult.

Further reading:

Sometimes you don’t need a botnet - Major media for example amplify Trump claims on average 19 times a day

Great essay by Cory Doctorow - The Engagement-Maximization presidency

Youtube: Through Autoplay to radicalization

While Twitter’s algorithms are mostly concerned with search and identification of trending topics (hashtags), Youtube is a personalized online service. It tries to capture a bigger piece of the attention pie by suggesting to users which videos to watch next.

The thing is, though, that Youtube’s algorithms tend to suggest progressively more extreme (radicalized) content, because they have literally learned from viewers’ behavior that people are likely to spend more time watching more extreme content.

For example, when you start watching a video about space exploration, it is very likely that sooner or later you will be recommended a video questioning the Moon landing. Hillary Clinton videos will lead to conspiracy theories, etc.
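The drift towards more extreme content can be illustrated with a toy recommender that simply maximizes learned watch time. This is a sketch under stated assumptions, not Youtube's real system: the `WATCH_LOG` numbers, the five "extremity levels" and both functions are hypothetical, and the recommender is assumed to pick only among videos close to the one currently playing.

```python
from collections import defaultdict

# Hypothetical watch log: (extremity level of the video, minutes watched).
# Levels run from 0 (mainstream) to 4 (most extreme); the numbers are made up.
WATCH_LOG = [
    (0, 3.0), (0, 4.0),
    (1, 5.0), (1, 6.0),
    (2, 7.0), (2, 8.0),
    (3, 9.5), (3, 10.5),
    (4, 12.0), (4, 13.0),
]


def learn_watch_time(log):
    """Average minutes watched per extremity level, 'learned' from past behavior."""
    totals = defaultdict(lambda: [0.0, 0])
    for level, minutes in log:
        totals[level][0] += minutes
        totals[level][1] += 1
    return {level: total / count for level, (total, count) in totals.items()}


def recommend_next(current_level, avg_watch, max_level=4):
    """Among videos similar to the current one, pick the level with the longest expected watch time."""
    candidates = [
        level
        for level in (current_level - 1, current_level, current_level + 1)
        if 0 <= level <= max_level
    ]
    return max(candidates, key=lambda level: avg_watch[level])


avg_watch = learn_watch_time(WATCH_LOG)
path = [0]  # the viewer starts on a mainstream video
for _ in range(5):
    path.append(recommend_next(path[-1], avg_watch))

print(path)
```

Nothing in the code "wants" to radicalize anyone. It only optimizes for attention, and given this assumed viewing data, each autoplay step moves one level further from the mainstream starting point until it reaches the top.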

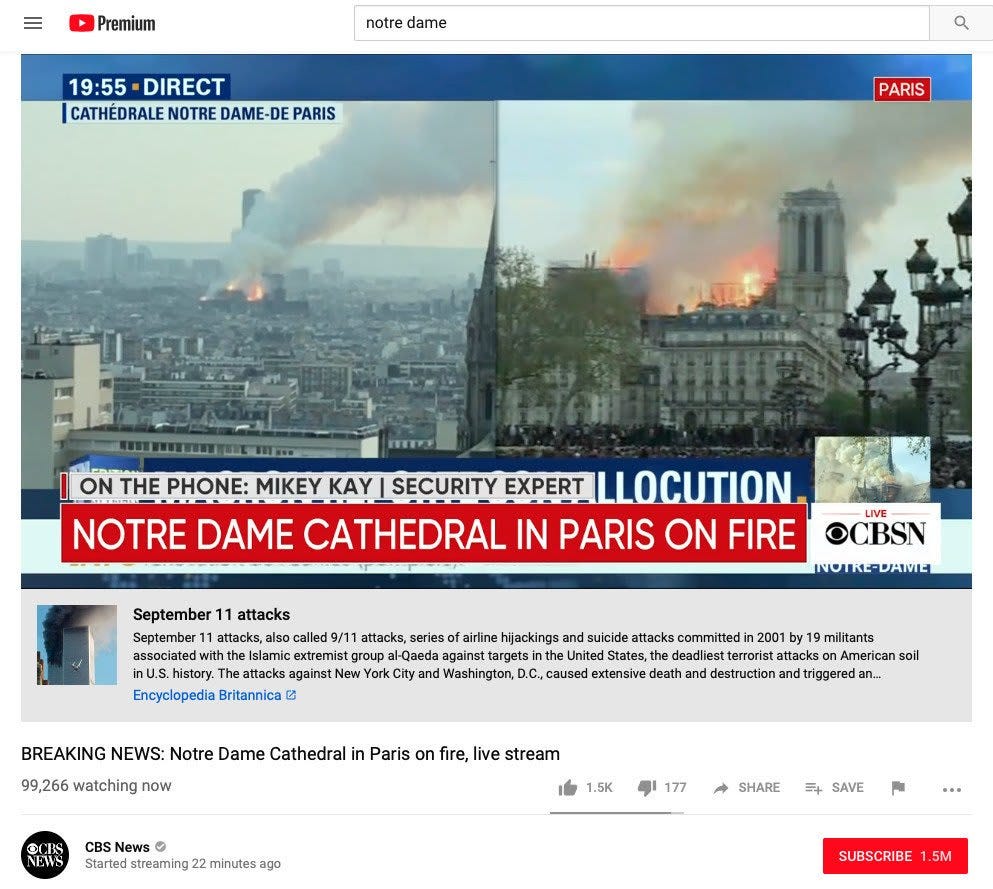

An infamous recent example: the Youtube algorithm recommending a Britannica article about the 9/11 attacks under the live video feed of the Notre Dame Cathedral fire.

When you combine this algorithmic principle with state-of-the-art SEO (Search Engine Optimization) techniques and tools, it is quite simple to polarize and further radicalize individual population groups by serving them appropriate content. This is what Russians (and others) have been doing, and they are really good at it.

Further reading:

A recent NYT article telling the story of a young deprived man turned into a right-wing radical by Youtube’s algos.

Facebook: Target Bubbles

The largest and most advanced platform running content distribution algorithms is Facebook. I wrote about Facebook at length in the previous issue of Algocracy Special.

My main message for the Czech Senators was that Facebook’s algorithms naturally enclose people in content bubbles, which get further radicalized by being served appropriately hypertargeted content.

It is OK to hypertarget when you run a marketing platform, but it becomes highly problematic when you mediate access to news and information for billions of people every day.
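The way an engagement-driven feed closes a user into a bubble can be shown with a very small simulation. It is a deliberately simplified sketch and not Facebook's actual ranking: the topic names, the starting `affinity` values, the `boost` and the `run_feed` function are hypothetical, and the user is assumed to engage with everything the feed shows.

```python
def run_feed(affinity, rounds=5, slots=2, boost=0.1):
    """Repeatedly show the top-`slots` topics and raise affinity for whatever was shown."""
    affinity = dict(affinity)  # work on a copy so the input stays untouched
    history = []
    for _ in range(rounds):
        shown = sorted(affinity, key=affinity.get, reverse=True)[:slots]
        history.append(shown)
        for topic in shown:  # assumption: the user engages with everything shown
            affinity[topic] += boost
    return affinity, history


# Hypothetical starting interests of one user (higher = more interested).
start = {"politics_a": 0.30, "politics_b": 0.26, "sports": 0.24, "science": 0.20}

final_affinity, history = run_feed(start)
ever_shown = {topic for shown in history for topic in shown}

print("ever shown:", sorted(ever_shown))
print("never shown:", sorted(set(start) - ever_shown))
```

A small initial difference in interest is enough: the two leading topics are reinforced every round, while sports and science never appear in the feed again.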

Further reading:

Previous issue of Algocracy Special: It’s time to unbundle Facebook.

Coming Soon: Deep Fakes - The Perfect Storm of Cyberpropaganda

Algorithms described above drive distribution of content which is still created by humans.

However, we are approaching an era where we will see more and more content generated by machines. It is already starting with textual content (by using Natural Language Generation) and soon enough it will evolve via other content modalities - images, audio - all the way to full videos indistinguishable from reality.

When this happens, the complete chain of content creation and distribution will be automated. Algorithms will dynamically create content personalized for various segments, measure its impact and constantly fine-tune its quality.

I call this the Perfect Storm of Cyberpropaganda, because the quality of fake content and the precision of its targeting may cause serious disruptions in society.

Imagine fake videos of corporate executives generated in conjunction with automated trading bots to game the stock market. Or videos of politicians making serious fake statements about critical topics and policies.

Or Mark Zuckerberg becoming a champion of transparency:

The amount and quality of such highly effective content will be so great that it will be impossible to fight with something like manual curation. There will have to be other AIs automatically detecting such fake content, but they seem to be playing catch-up for now...

Further reading:

Spies have used AI-generated profile pictures to connect with targets over LinkedIn

Final Remarks

After our presentations, a panel discussion of about 40 minutes followed. The Czech senators who were present were clearly surprised by the scale and level of automation of current cyber-propaganda.

There was also a shared opinion that there is only little they can do (as MPs of a country with a population equal to the size of a user segment on which Facebook tests its new features) to hold platforms responsible for their impact on society.

We will see how the relationships between governments and Big Tech companies will develop, but the tension is clearly growing.

Further reading:

Canada doesn’t give a damn about how big the Big Tech is - Mark Zuckerberg will face summons once he enters the country.

Good essay about Automatic weapons of social media

A set of whitepapers for policy makers about mitigating the political impacts of social media and digital platform concentration.